Improve pipeline_stable_diffusion_inpaint_legacy.py #1585

Merged

patrickvonplaten merged 2 commits into huggingface:main on Dec 15, 2022



I tried to apply your suggested settings to the ONNX legacy inpainting pipeline, but `noise_pred_uncond` isn't defined until after the noise is generated.

Any thoughts on adding this to the ONNX legacy inpainting pipeline as well? See `pipeline_onnx_stable_diffusion_inpaint_legacy.py`, lines 238, 270, and 378.
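The ordering issue raised here comes from classifier-free guidance: the unconditional prediction only exists once the model has been run on the duplicated latents, so any re-noising that uses it has to happen after that call, inside the loop, rather than where the initial noise is sampled. A minimal NumPy sketch of one such step follows. `toy_unet` is a hypothetical placeholder for the UNet (or ONNX session) call, the `embeddings` array stands in for the unconditional/text embeddings, and the guidance formula is the standard `uncond + scale * (text - uncond)`.

```python
from typing import Tuple

import numpy as np


def toy_unet(latent_model_input: np.ndarray, embeddings: np.ndarray) -> np.ndarray:
    """Hypothetical stand-in for the UNet / ONNX session call."""
    return 0.1 * latent_model_input + embeddings


def guided_noise_prediction(
    latents: np.ndarray, embeddings: np.ndarray, guidance_scale: float
) -> Tuple[np.ndarray, np.ndarray]:
    # 1. Duplicate the batch: first half unconditional, second half text-conditioned.
    latent_model_input = np.concatenate([latents, latents], axis=0)
    # 2. Only after this call does an unconditional prediction exist.
    noise_pred = toy_unet(latent_model_input, embeddings)
    noise_pred_uncond, noise_pred_text = np.split(noise_pred, 2, axis=0)
    # 3. Classifier-free guidance combines the two halves.
    guided = noise_pred_uncond + guidance_scale * (noise_pred_text - noise_pred_uncond)
    return guided, noise_pred_uncond


latents = np.zeros((1, 4, 2, 2))
embeddings = np.array([0.0, 1.0]).reshape(2, 1, 1, 1)  # [unconditional, text] stand-ins
guided, uncond = guided_noise_prediction(latents, embeddings, guidance_scale=7.5)
print(guided.shape, float(guided.max()))  # (1, 4, 2, 2) 7.5
```

In this ordering, `noise_pred_uncond` is available for the mask-blending step at the end of the same iteration, which is where the PyTorch pipeline uses it.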

Contributor (author):

The lines to change are 417-419, when defining …

Contributor (author):

The code change is similar to PR #1583; linking them so both are reviewed at the same time.


patrickvonplaten (Contributor) approved these changes on Dec 15, 2022 and left a comment:

Thanks for the PR!

sliard pushed a commit to sliard/diffusers that referenced this pull request on Dec 21, 2022:

* update inpaint_legacy to allow the use of predicted noise to construct intermediate diffused images
* Update src/diffusers/pipelines/stable_diffusion/pipeline_stable_diffusion_inpaint_legacy.py

Co-authored-by: Patrick von Platen <patrick.v.platen@gmail.com>

yoonseokjin pushed a commit to yoonseokjin/diffusers that referenced this pull request on Dec 25, 2023, with the same commit message.


This is basically a one-line change to the file `pipeline_stable_diffusion_inpaint_legacy.py`. I allow the possibility of replacing

`init_latents_proper = self.scheduler.add_noise(init_latents_orig, noise, torch.tensor([t]))`

by

`init_latents_proper = self.scheduler.add_noise(init_latents_orig, noise_pred_uncond, torch.tensor([t]))`

with the argument `add_predicted_noise`, which now defaults to `True` (i.e., the latter option is used by default). (Maybe it is better to keep the default as `False`, and maybe we can find a better name for this argument.)

What I am doing here is using the unconditionally predicted noise instead of the initial noise to create the diffused samples used in the reverse diffusion process; as argued in this paper, this leads to more coherent results.
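A minimal NumPy sketch of the blending step this change touches, assuming a DDPM/DDIM-style forward process `x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps` and the legacy pipeline's convention that `mask == 1` marks latents to keep from the original image. `add_noise` and `blend_known_region` are hypothetical stand-ins, not the diffusers API (the real scheduler's `add_noise` takes timesteps and looks up `alpha_bar_t` itself); `add_predicted_noise` mirrors the argument proposed here, and the sketch defaults it to `False`, i.e. the original behaviour.

```python
import numpy as np


def add_noise(x0: np.ndarray, eps: np.ndarray, alpha_bar_t: float) -> np.ndarray:
    """Forward-diffuse x0 to timestep t: sqrt(a_bar_t) * x0 + sqrt(1 - a_bar_t) * eps."""
    return np.sqrt(alpha_bar_t) * x0 + np.sqrt(1.0 - alpha_bar_t) * eps


def blend_known_region(
    latents: np.ndarray,
    init_latents_orig: np.ndarray,
    mask: np.ndarray,
    noise: np.ndarray,
    noise_pred_uncond: np.ndarray,
    alpha_bar_t: float,
    add_predicted_noise: bool = False,
) -> np.ndarray:
    """Overwrite the kept region (mask == 1) with a re-noised copy of the original latents."""
    # Original behaviour: re-noise with the fixed noise sampled before the loop.
    # Proposed behaviour: re-noise with the model's unconditional noise prediction.
    eps = noise_pred_uncond if add_predicted_noise else noise
    init_latents_proper = add_noise(init_latents_orig, eps, alpha_bar_t)
    return init_latents_proper * mask + latents * (1.0 - mask)


rng = np.random.default_rng(0)
shape = (1, 4, 8, 8)
mask = np.zeros(shape)
mask[..., :4] = 1.0  # keep the left half of the latent grid
out = blend_known_region(
    latents=rng.standard_normal(shape),
    init_latents_orig=rng.standard_normal(shape),
    mask=mask,
    noise=rng.standard_normal(shape),
    noise_pred_uncond=rng.standard_normal(shape),
    alpha_bar_t=0.5,
    add_predicted_noise=True,
)
print(out.shape)  # (1, 4, 8, 8)
```

The only thing the flag changes is which `eps` feeds the forward-diffusion formula; the mask blending itself is identical in both cases.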

It turns out, however, that two of the three tests fail when I run `tests/pipelines/stable_diffusion/test_stable_diffusion_inpaint_legacy.py`. Both are assertion errors: `AssertionError: assert 0.012784755849838236 < 0.01` and `AssertionError: assert 0.01317704176902773 < 0.01`. I suspect this is a natural consequence of the change to the algorithm, and since the difference is small, I don't think it is a severe problem.
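For context, a sketch of the kind of check behind these assertions, assuming the test compares a small slice of the output image against hard-coded expected values via the maximum absolute difference, with the `< 0.01` threshold seen in the messages. `max_abs_diff` and `passes` are hypothetical helper names; the two reported differences exceed the threshold by roughly 0.003.

```python
import numpy as np

TOLERANCE = 1e-2  # matches the "< 0.01" in the reported assertion messages


def max_abs_diff(image_slice: np.ndarray, expected_slice: np.ndarray) -> float:
    """Largest element-wise absolute difference between two flattened slices."""
    return float(np.abs(image_slice.flatten() - expected_slice.flatten()).max())


def passes(image_slice: np.ndarray, expected_slice: np.ndarray, tol: float = TOLERANCE) -> bool:
    return max_abs_diff(image_slice, expected_slice) < tol


# Both reported differences fall just above the tolerance.
reported = [0.012784755849838236, 0.01317704176902773]
print([d < TOLERANCE for d in reported])  # [False, False]
```

Because the expected slices were recorded with the original noise, any change to the sampling path shifts the output slightly; whether the new values should replace the expected slices is a separate decision.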

Anyway, the difference in the inpainted results is very pronounced.

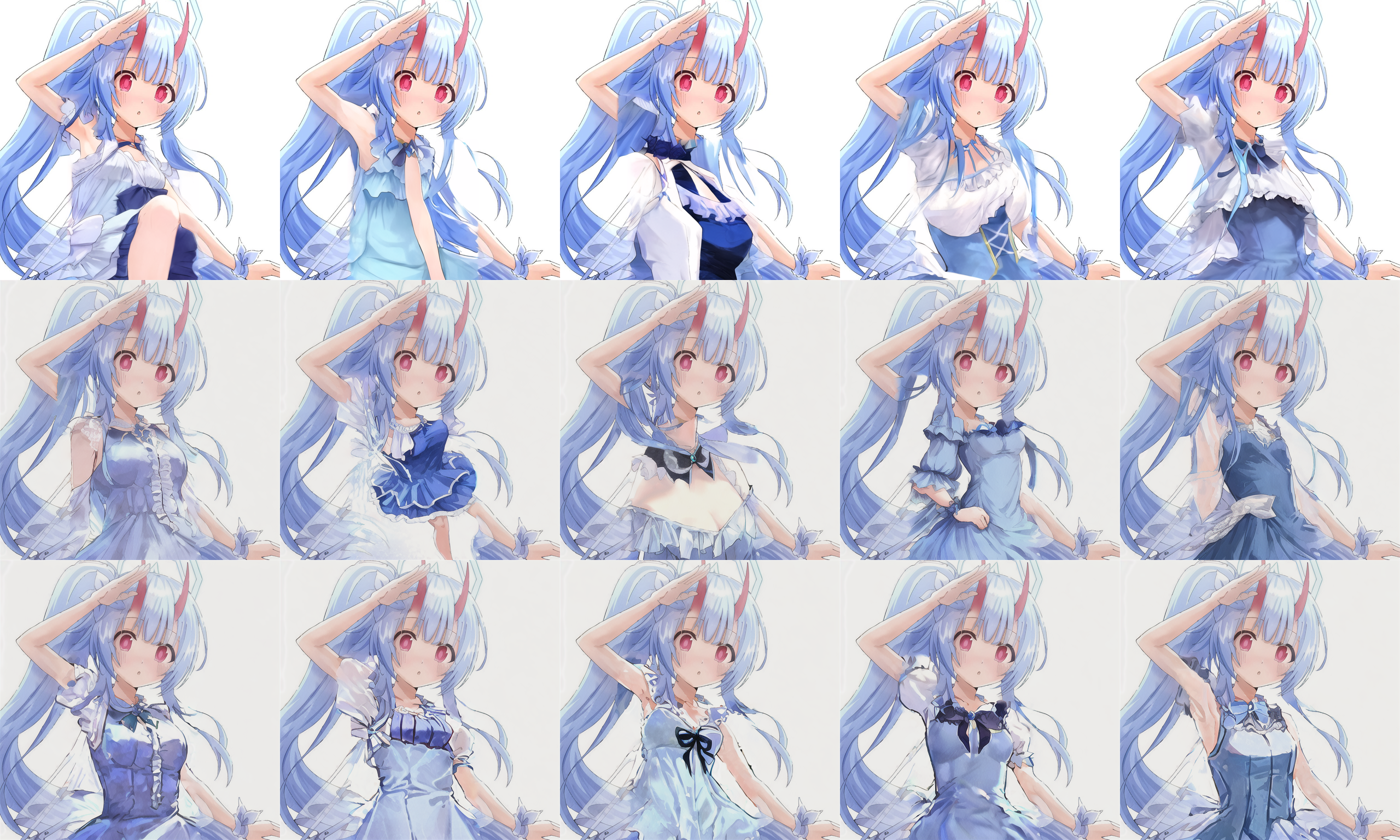

In the following, I compare results from AUTOMATIC1111 web UI DDIM inpainting (top row), the original inpaint_legacy script (middle row), and the modified inpaint_legacy script (bottom row). For the latter two, I use the same random seeds.

The denoising strength is set to 1 so that the original content of the masked areas is completely ignored.
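A sketch of why a strength of 1 discards the original content, assuming the usual img2img-style schedule in which the pipeline's `strength` decides how many of the `num_inference_steps` are actually run (the scheduler's `steps_offset` is ignored here for simplicity). With `strength=1.0` the loop starts from the noisiest timestep, so the starting latents carry very little information from the input image in the region being repainted. `start_step` and `steps_run` are hypothetical helper names.

```python
def start_step(num_inference_steps: int, strength: float) -> int:
    """Index into the timestep schedule where denoising begins (0 = noisiest timestep)."""
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be in [0, 1]")
    # Number of steps actually run, capped at the full schedule.
    init_timestep = min(int(num_inference_steps * strength), num_inference_steps)
    return num_inference_steps - init_timestep


def steps_run(num_inference_steps: int, strength: float) -> int:
    return num_inference_steps - start_step(num_inference_steps, strength)


print(start_step(50, 1.0), steps_run(50, 1.0))  # 0 50
print(start_step(50, 0.5), steps_run(50, 0.5))  # 25 25
```

Lower strengths skip the earliest (noisiest) steps and therefore keep more of the input image's structure in the masked region.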

Example 1

model: SD 1.4

cfg: 10

Input:

Prompt: guinea pig

Example 2

model: Linaqruf/anything-v3.0

cfg: 7.5

Input:

Prompt: anime girl with blue hair in dress